- Jeff Doyle on the need for IPv6 (posted 2003-08-03)

In an article for the Australian Commsworld site, Juniper's Jeff Doyle explains his views on the need for IPv6. He has little patience with the scare tactics some IPv6 proponents use, and argues instead that IPv6 is simply progress and will be cheaper to administer in the long run.

- SANS BGP security paper (posted 2003-08-03)

The SANS (SysAdmin, Audit, Network, Security) Institute has published Is The Border Gateway Protocol Safe?, a paper (PDF) on different security aspects of BGP. Nothing groundbreaking, but detailed and a good read for people with a sysadmin background.

- Worm impact on BGP (posted 2003-08-03)

Yet another worm analysis article:

Effects of Worms on Internet Routing Stability

on the SecurityFocus site. This one covers Code Red, Nimda and the SQL worm. No prizes for guessing which had the most impact on the stability of the global routing infrastructure.

Yes, it's old news, unlike

Cisco's latest vulnerability

(which just seems like very old news) but this stuff isn't going away and we have to deal with it. Are your routers prepared for another one of these worms?

- Interdomain Routing Validation (IRV) (posted 2003-08-10)

At the

Network and Distributed System Security Symposium 2003

(sponsored by the NSA), a group of researchers from

AT&T Labs Research

(and one from Harvard)

presented a new approach to increasing interdomain routing security. Unlike Secure BGP (S-BGP) and Secure Origin BGP

(soBGP), this approach carefully avoids making any changes to BGP. Instead, the necessary processing is done on

an external box: the Interdomain Routing Validator, which implements the Interdomain Routing Validation (IRV) protocol.

The IRV stays in contact with all BGP routers within the AS and holds a copy of the AS's routing policy. The idea is that IRVs from different ASes contact each other on reception of BGP update messages to check whether the update is valid.

See the paper

Working Around BGP: An Incremental Approach to Improving Security and

Accuracy of Interdomain Routing

in the NDSS'03 proceedings for the details. It's a bit wordy at 11 two-column pages (in PDF), but it does a good job

of explaining some of the BGP security problems and the S-BGP approach in addition to the IRV architecture.

This isn't a bad idea per se. However, the authors fail to address some important issues. For instance, they don't discuss the fact that routers only propagate the best route over BGP, making it impossible for the IRV to get a complete view of all incoming BGP updates. They don't discuss the security and reliability implications of having a centralized service for finding the IRVs associated with each AS. Last but not least, there is no discussion of what exactly happens when invalid BGP information is discovered.

The fact that peering policies are deemed potentially "secret" more than once also strikes me as odd. How exactly are ISPs going to hide this information from their BGP-speaking customers? Or anyone who knows how to use the traceroute command, for that matter?

Still, I hope they'll bring this work into the IETF or at least one of the fora where interdomain routing operation is

discussed, such as NANOG or RIPE.

- What is wrong with this picture (DOS and worms) (posted 2003-08-14)

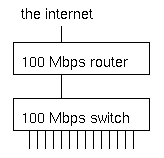

What's wrong with this picture?


Under normal circumstances, not much. But when several machines are infected with an aggressive worm, or have been compromised by an attacker and are participating in a denial of service attack, the switch will receive more traffic from the hosts connected to its 100 Mbps ports than it can transmit to the router, which also has a 100 Mbps port.

The result is that a good part of all traffic is dropped by the switch. This doesn't matter much to the abusive hosts, but the high packet loss makes it very hard or even impossible for the other hosts to communicate over the net. We've seen this happen with the MS SQL worm in January of this year, and very likely the same will happen on August 16th when machines infected with the "Blaster" worm (who comes up with these silly names anyway?) start a distributed denial of service attack on the Windows Update website. Hopefully the impact will be mitigated by the advance warning.

So what can we do? Obviously, within our own networks we should make sure hosts and servers aren't vulnerable: not running and/or exposing vulnerable services, and quickly fixing any and all infections. However, for service or hosting providers it isn't as simple, as there will invariably be customers that don't follow best practices. Since it is very close to impossible to have a network without any places where traffic is funneled/aggregated, it's essential to have routers or switches that can handle the full load of all the hosts on the internal network sending at full speed and then filter this traffic or apply quality of service measures such as rate limiting or priority queuing.

This is what multilayer switches and layer 3 switches such as the Cisco 6500 series switches with a router module or Foundry, Extreme and Riverstone router/switches can do very well, but these are obviously significantly more expensive than regular switches. It should still be possible to use "dumb" aggregation switches, but only if the uplink capacity is equal to or higher than the combined inputs. So a 48 port 10/100 switch that connects to a filter-capable router/switch with gigabit ethernet could support 5 ports at 100 Mbps and the remaining 43 ports at 10 Mbps. In practice a switch with 24 or 48 100 Mbps ports and a gigabit uplink, or 24 or 48 10 Mbps ports with a fast ethernet uplink, will probably work OK, as it is unlikely that more than 40% or even 20% of all hosts are going to be infected at the same time. But the not uncommon practice of aggregating 24 100 Mbps ports into a 100 Mbps uplink is way too dangerous these days.
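The port arithmetic above can be checked with a few lines of Python. This is only a back-of-the-envelope sketch: the function names are made up for illustration, and it assumes every infected host sends at full line rate.

```python
def offered_load_mbps(port_speeds_mbps):
    """Worst-case load if every access port sends at full line rate."""
    return sum(port_speeds_mbps)


def uplink_is_safe(port_speeds_mbps, uplink_mbps):
    """True if the uplink can carry all ports sending at full speed."""
    return offered_load_mbps(port_speeds_mbps) <= uplink_mbps


def max_infected_fraction(n_ports, port_mbps, uplink_mbps):
    """Fraction of ports that can send at line rate before the uplink fills."""
    return min(1.0, uplink_mbps / (n_ports * port_mbps))


# The 48 port 10/100 switch with a gigabit uplink from the text:
mixed_ports = [100] * 5 + [10] * 43
print(offered_load_mbps(mixed_ports), uplink_is_safe(mixed_ports, 1000))

# 24 and 48 ports at 100 Mbps on a gigabit uplink, then 24 ports on 100 Mbps:
for n_ports, uplink in [(24, 1000), (48, 1000), (24, 100)]:
    print(n_ports, uplink, round(max_infected_fraction(n_ports, 100, uplink), 3))
```

The first case adds up to 930 Mbps, so it fits in the gigabit uplink. The 24 and 48 port gigabit cases are where the 40% and 20% figures come from, while 24 ports sharing a 100 Mbps uplink can be saturated by a single host.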

Stay tuned for more worm news soon.

- Power problems (posted 2003-08-24)

The power outage in parts of Canada and the US a little over a week ago didn't cause too many problems network-wise.

(I'm sure the people who were stuck in elevators, had to walk down 50 flights of stairs or walk from Manhattan

to Brooklyn or Queens have a different take on the whole thing.) The phone network in general also experienced

problems, mostly due to congestion. The cell phone networks were hardly usable.

There was a 2% or so decline in the number of

routes in the global routing table. The interesting thing is that not all of the 2500 routes dropped off the net

immediately, but some did so over the course of three hours. This would indicate depleting backup power.

See the report by

the Renesys Corporation for more details.

Following the outage there was (as always) a long discussion on NANOG where several people expressed surprise

about how such a large part of the power grid could be taken out by a single failure. The main reason for this is the

complexity in synchronizing the AC frequencies in different parts of the grid. Quebec uses high voltage direct current

(HVDC) technology to connect to the surrounding grids, and wasn't affected.

In the meantime the weather in Europe has been exceedingly hot. This gives rise to cooling problems for many

power plants as the river water that many of them use gets too warm. In Holland, power plants are allowed to increase

the water temperature by 7 degrees Celsius with a maximum outlet temperature of 30 degrees. But the input

temperature got as high as 28 degrees in some places. So many plants couldn't work at full capacity (even with

temporary 32 degree permits), while electricity demand was higher than usual, also as a result of the heat.

(The high loads were also an important contributor to the problems in the US and Canada as there was little

reserve capacity in the distribution grids.) The plans for rolling blackouts were already on the table when the weather got cooler over the last week.

Moral of these stories: backup power is a necessity, and batteries alone don't hack it, as the outage lasted for

three days in some areas.

- Worms (posted 2003-08-24)

The worm situation seems to be getting worse, but fortunately worm makers fail to exploit the full potential

of the vulnerabilities they use. For instance, we were all waiting to see what would happen to the

Windows Update site when the "MS Blaster" worm was going

to attack it on August 16th. But nothing happened. I read that Microsoft took the site offline to avoid problems,

having no intention to bring it back online again, but obviously this information was incorrect because they're

(back?) online now.

However, Microsoft received help from another worm creator who took it upon him/herself to fix the security hole that

Blaster exploits, by first exploiting the same vulnerability, then removing Blaster and finally downloading Microsoft's patch.

But in order to help scanning, the new worm (called "Nachi") first pings potential targets, leading to huge ICMP floods in some

networks, although others didn't see much traffic generated by the new worm. Nachi uses an uncommon ICMP echo

request packet size of 92 bytes, which makes it possible to filter the worm without having to block all ping traffic. See

Cisco's recommendations.
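To illustrate why the unusual size makes the worm easy to single out, here is a minimal Python sketch of such a classifier. It is not Cisco's configuration, and it rests on an assumption: that the 92 bytes refers to the total length of the IPv4 packet. The function and helper names are made up for this example.

```python
import struct

ICMP_PROTOCOL = 1
ICMP_ECHO_REQUEST = 8
NACHI_TOTAL_LENGTH = 92  # assumption: 92 bytes is the IPv4 total length


def is_nachi_like_ping(packet: bytes) -> bool:
    """True for an IPv4 ICMP echo request whose total length is 92 bytes."""
    if len(packet) < 20:
        return False
    version_ihl, _tos, total_length = struct.unpack("!BBH", packet[:4])
    if version_ihl >> 4 != 4:
        return False
    header_len = (version_ihl & 0x0F) * 4
    if header_len < 20 or len(packet) <= header_len:
        return False
    protocol = packet[9]            # protocol field of the IPv4 header
    icmp_type = packet[header_len]  # first byte after the IPv4 header
    return (protocol == ICMP_PROTOCOL
            and icmp_type == ICMP_ECHO_REQUEST
            and total_length == NACHI_TOTAL_LENGTH)


def build_echo_request(total_length: int) -> bytes:
    """Test helper: a bare echo request with zeroed checksums."""
    payload = b"\xaa" * (total_length - 20 - 8)
    ip_header = struct.pack("!BBHHHBBH4s4s", 0x45, 0, total_length, 0, 0,
                            64, ICMP_PROTOCOL, 0,
                            bytes([192, 0, 2, 1]), bytes([192, 0, 2, 2]))
    icmp_header = struct.pack("!BBHHH", ICMP_ECHO_REQUEST, 0, 0, 1, 1)
    return ip_header + icmp_header + payload


print(is_nachi_like_ping(build_echo_request(92)))  # matches the size filter
print(is_nachi_like_ping(build_echo_request(84)))  # 56 data bytes, not matched
```

A filter like this leaves ordinary pings of other sizes alone, which is the whole point of matching on the length rather than blocking ICMP echo outright.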

It seems TNT dial-up concentrators have a hard time handling this traffic and reboot periodically. The issue seems to

be lack of memory/CPU to cache all the destinations the worm tries to contact, just like what happened with the MS SQL

worm earlier this year and others before it.

Then there's the Sobig worm. This one uses email to spread, which has two advantages: the users actually see the worm

so they can't ignore the issue and the network impact is negligible as the spread speed is limited by mail server

capacity. Unfortunately, this one forges the source with email addresses found on the infected computer, which means

"innocent bystanders" receive the blame for sending out the worm. Interestingly enough, I haven't received a single copy

of either this worm or its backscatter so far, even though it seems to be rather aggressive: many people report receiving

lots (even thousands) of copies.

Then on Friday there was a new development as it was discovered that Sobig would contact 20 IP addresses at 1900 UTC,

presumably to receive new instructions/malicious code. 19 of the addresses were offline by this time, and the remaining

one immediately became heavily congested. So far, nobody has been able to determine what was supposed to happen,

but presumably, it didn't.

There was some discussion on the NANOG list about whether it's a good

idea to block TCP/UDP ports to help stop or slow down worms. The majority of those who posted their opinion on

the subject feel that the network shouldn't interfere with what users are doing, unless the network itself is at risk.

This means temporary filters when there is a really aggressive worm on the loose, but not permanently filtering

every possible vulnerable service. However, a sizable minority is in favor of such permanent filtering. But apart from philosophical

preferences, it makes little sense to do this as the more you filter, the bigger the impact for legitimate users,

and it has been well-established that worms manage to bypass filters and firewalls, presumably through VPNs

or because people bring in infected laptops and connect them to the internal network.

Something to look forward to: with IPv6, there are so many addresses (even in a single subnet) that simply generating

random addresses and checking whether there is a vulnerable host there isn't a usable approach. On the other hand,

I've already seen scans on a virtual WWW server which means that the scanning happened using the DNS name rather

than the server's IP address, so it's unlikely that the IPv6 internet will remain completely wormfree, even if things

won't be as bad as they're now in IPv4.
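To put a number on "so many addresses", here is a small Python sketch of how long an exhaustive scan would take. The probe rate of one million addresses per second is an arbitrary assumption, picked to be generous to the worm; the function name is made up for this example.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def seconds_to_scan(address_bits, probes_per_second):
    """Time to probe every address in a space of 2**address_bits addresses."""
    return 2 ** address_bits / probes_per_second


PROBE_RATE = 1_000_000  # assumed probes per second, not a measured figure

# The entire IPv4 address space (32 bits):
print(seconds_to_scan(32, PROBE_RATE) / 60, "minutes")

# A single IPv6 subnet with a 64 bit interface identifier:
print(seconds_to_scan(64, PROBE_RATE) / SECONDS_PER_YEAR, "years")
```

At that rate all of IPv4 takes a little over an hour, while a single /64 takes hundreds of thousands of years, which is why worms would have to find their targets some other way, such as through the DNS.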

- RIPE 46 Intro (posted 2003-09-01)

RIPE 46, September 1 - 5, Amsterdam

Three times a year there is a RIPE meeting. Twice a year it's in the

Krasnapolsky hotel in Amsterdam, and once it's elsewhere in Europe.

This week there is the RIPE 46 meeting,

once again in Amsterdam.

Note that if you can't attend, you can follow what's happening using the

experimental streaming service.

The streaming bandwidth

is around 225 kbps for the highest quality but you can fall back to lower

quality or audio only, I think. It works both with

Windows Mediaplayer and

Video Lan Client on my Mac.

There are also archives

of the streamed sessions.

The presentation slides

are also generally available.

- RIPE 46 Monday - EOF (posted 2003-09-01)

Monday

VoIP

On Monday there were

talks about voice over IP

the whole day as part of the

European Operator Forum (EOF). I had somewhat mixed feelings about this. On

the one hand I'm pretty interested in VoIP, but I haven't done anything with

it in practice so I was expecting to learn a few things. Unfortunately, many

of the talks were way too detailed, explaining stuff like the old

electromechanical switching mechanisms in the phone network. There was also

lots of stuff on how to interconnect your VoIP stuff with the plain old

telephone system. This could/should be interesting but I found it

again too detailed. I guess I would have liked a smaller scale, more practical

approach on how to call over the net and not immediately focus on the POTS

network as I'm not going to get rid of my existing phone just yet.

SIP/H.323

But some cool stuff: I got to know a little bit more about SIP vs H.323.

Apps like Microsoft's Netmeeting use the ITU H.323 protocol family

as their signalling protocol, but today's products are more inclined towards

SIP, which is an IETF standard. Note that the actual voice packets are

governed by a host of other protocols. Usually, it's even possible to call

using an IP address without using SIP or H.323 on VoIP (Ethernet) phones.

But a SIP server/proxy provides all the features that usually come from a

PABX: implementing numbering plans, connecting to gateways, transferring

calls, putting calls on hold, that kind of thing.

There are now VoIP phones that cost about 60 - 75 dollars/euros and

there is the free Asterisk

"Open Source Linux PBX" software.

MPLS DoS traffic shunt

In the afternoon, there also was

a presentation about using an MPLS DoS traffic shunt.

(Also presented at NANOG.)

This is basically similar to what I talk about in my anti-DoS article, but they use MPLS to backhaul the traffic to a location

where there is a Riverhead

anti-DoS filtering box and then push the traffic out to where it needs to go.

The MPLS paths are automatically created when the right iBGP routes are

present, but COLT (who implemented this) doesn't want its customers to

enable this automatically: the NOC must create the iBGP routes for this

manually. Note that the reason this makes sense is that COLT has huge

amounts of bandwidth and around 60 locations where they interconnect with

other networks, but the Riverheads are way too expensive to buy 60 boxes.

- RIPE 46 Tuesday - EOF, geo aggregation, security BOF (posted 2003-09-01)

Tuesday

Sometimes everything seems like routing

It seems the IETF doesn't like to organize its meetings around San Francisco

because those meetings tend to be "zoos" because they attract too many

"local tourists". Well, a local tourist is what I kind of am at the RIPE meeting this

week. But unfortunately I'm not completely local: I take the train from

The Hague to Amsterdam each morning. Today, after the NSP Security BOF

(more on that below) I walked to the Amsterdam Central Station to take

the train back home. But there was a power outage in Leiden, so I've been

rerouted over Utrecht.

Obviously there is lots of congestion and increased delay, but so far

no commuter loss. So I'm typing this sitting on the floor of a hugely

overstuffed train, two hours after I got to the train

station, still no closer to home.

Anyway... More EOF

This morning's two sessions concluded the EOF part of this week's RIPE

meeting. First we heard from David Malone

from tcd.ie in Dublin, and in the second session

from Dave Wilson, his ISP, about their efforts to enable IPv6. Some good stuff: don't

use a stateless autoconfigured address (with a MAC address in it) for the

DNS you register with your favorite registries: you don't want to have to

change the delegation when you replace the NIC. And since there are more

than enough subnets, why not splurge on a dedicated /64 for this? Makes

it easy to move the primary/secondary DNS service around the network.

They also talked about possible multicasting problems with switches that

do IGMP snooping but are unaware of IPv6, and about next hop troubles when

exchanging IPv6 routes over a BGP session with IPv4 transport or the other

way around, but in both cases I think this is supposed to work, so I'll

look into this to see what the real story is. Next week, that is.

Geographical aggregation

During the routing working group session in the afternoon, I once again

presented my provider-internal geographical aggregation draft. (See

my presentations page for a link.) I have

added some stuff on why this is still useful even if we can have multihoming

using multiple addresses per host:

- We can deploy multihoming using geographical aggregation really fast,

because we start multihoming as soon as a suitable address assignment system

is operational, and clean up the routing table later in individual networks

by implementing the necessary filtering.

- Because optical (fiber path) switching is so cheap relative to layer

2 switching or routing (Cees de Laat of the University of Amsterdam says

it's even 100 : 10 : 1) it's entirely possible we'll see on-demand fiber

links. Routing obviously has to deal with this, and if we can aggregate

away the uninteresting stuff we're in much better shape for this.

- If a host gets several addresses with different geographical properties,

the host has the opportunity to do some elementary source routing and use

the address with the shortest (or highest bandwidth) path.

Unfortunately, the room didn't seem all that receptive. Well, too bad.

I guess the routing crowd isn't ready for IPv6 stuff in general

and somewhat non-mainstream IPv6 stuff in particular.

NSP Security BOF

At six, there was the NSP Security Birds of a Feather session. It seems

there is a special mailinglist for operators who deal with denial of

service, but they keep membership limited to a few key people per ISP.

Obviously that means discussing who is allowed on the list and who is

kicked off takes a lot of time.

After that there was an hour-by-hour account from a Cisco incident response

guy (Damir Rajnovic) about the input queue locking up vulnerability that got out in June.

It seems they spent a lot of time keeping this under wraps. I'm not sure

if I'm very happy about this. On the one hand, it gives them time to

fix the bug, on the other hand our systems are vulnerable without us

knowing about it. They went public a day or so earlier than they wanted

because there were all kinds of rumours floating around. It turned out

that keeping it under wraps wasn't entirely a bad idea as there was an

exploit within the hour after the details got out. But I'm not a huge

fan of them telling only tier-1 ISPs that "people should stay at the

office" and then disclosing the information to them first. It seems to

me that announcing to everyone that there will be an announcement at some

time 12 or 24 hours in the future makes more sense. In the end they couldn't

keep the fact that something was going on under wraps anyway.

And large numbers of updated images with vague release notes also turned

out to be a big clue to people who pay attention to such things. One thing

was pretty good: it seems that Cisco itself is now working on reducing

the huge numbers of different images and feature sets, because this makes

testing hell.

- RIPE 46 Wednesday - Routing, IPv6 (posted 2003-09-16)

Wednesday

Routing

Wednesday brought sessions about my two favorite subjects: routing and IPv6. However, I didn't find most of the routing subjects very interesting: RIS Update, Verification of Zebra as a BGP Measurement Instrument, Comparative analysis of BGP update metrics. The last one sounds kind of interesting but it comes down to a long analysis of what you get when you compare BGP updates gathered at different locations such as the Amsterdam Internet Exchange looking glass and the Oregon Internet Exchange Route Views.

Yesterday's presentation in the routing wg about bidirectional forwarding detection (that I completely forgot about during all the train rerouting) was much more interesting. Daves Katz and Ward wrote an Internet Draft

draft-katz-ward-bfd-01.txt for a new protocol that makes it possible for routers to check whether the other side is still forwarding. This goes beyond the link keepalives that many protocols employ, because it also tests if there is any actual forwarding happening. And the protocol works for unidirectional links and to top it all off, it works at millisecond granularity. There is a lot of interest in this protocol, so there is considerable pressure to get it finished soon.

But Wednesday's routing session wasn't a complete write-off as Pascal Gloor presented the

Netlantis Project. This is a collection of BGP tools. Especially the

Graphical AS Matrix Tool is pretty cool:

it shows you the interconnections between ASes. I'm not exactly sure how it decides which ASes to include,

but it still provides a nice overview.

IPv6

In the afternoon there was the IPv6 working group session which conflicted with the Technical Security working group

session which I would also have liked to attend...

Kurtis Lindqvist presented an

IETF multi6 wg update.

Gert Doering talked about the IPv6 routing table. Apart from the size, there are some notable differences with

IPv4: IPv6 BGP interconnection doesn't reflect business relationships or anything close to physical topology: people are still giving away free IPv6 transit and tunneling all over the place. This is getting better, though. (The problem with this is that you get lots of routes but no way to know in advance which are good. Nice to have free transit, not so nice when it's over a tunnel spanning the globe.) There are now nearly 500 entries in the global IPv6 table, which is nearly twice as many as two

years ago. About half of those are /32s from the RIRs (2001::/16 space), and the rest more or less equally distributed

over /35s from the RIRs and /24s, /28s and /32s from 6bone space (3ffe::/16).

I'm not sure if it was Gert, but someone remarked during a presentation: "In Asia, they run IPv6 for production.

In Europe, they run it for fun. In the US, they don't run it at all."

Jeroen Massar talked about "ghost busting". When the Regional Internet Registries started giving out IPv6 space, they

assigned /35s to ISPs. Later they changed this to /32s. The assignments were done in such a way that an ISP could

simply change their /35 announcement to a /32 announcement "in place". However, this is not entirely without its

problems as the longest match first rule dictates that a longer (more specific) prefix is always preferred (such as a /35 over a /32), regardless of the AS path length or other BGP metrics. With everyone giving away free transit, there are huge amounts of potential longer paths that BGP will explore before the /35 finally disappears from the routing table and the /32 is used.
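The effect of the longest match first rule can be shown with a short Python sketch using the standard ipaddress module. The prefixes come from the IPv6 documentation range and the AS numbers are made up; none of them are the actual routes discussed at the meeting.

```python
import ipaddress


def longest_match(destination, routes):
    """Return the (prefix, as_path) entry with the longest matching prefix.

    AS path length is deliberately ignored: only the prefix length decides.
    """
    address = ipaddress.ip_address(destination)
    candidates = [route for route in routes if address in route[0]]
    if not candidates:
        return None
    return max(candidates, key=lambda route: route[0].prefixlen)


# Hypothetical table: a fresh /32 with a short AS path and a stale /35
# that is still around with a very long AS path.
routes = [
    (ipaddress.ip_network("2001:db8::/32"), [64500]),
    (ipaddress.ip_network("2001:db8::/35"),
     [64501, 64502, 64503, 64504, 64505, 64500]),
]

print(longest_match("2001:db8:1::1", routes))     # covered by the /35
print(longest_match("2001:db8:8000::1", routes))  # only covered by the /32
```

For any destination inside the /35, the stale route wins no matter how long its AS path is. Traffic only follows the /32 once the /35 is really gone from the table.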

To add insult to injury, there appear to be bugs that make very long AS paths stay around when they should have disappeared. These are called "ghosts", hence the ghost busting. See the

Ghost Route Hunter page for more information.

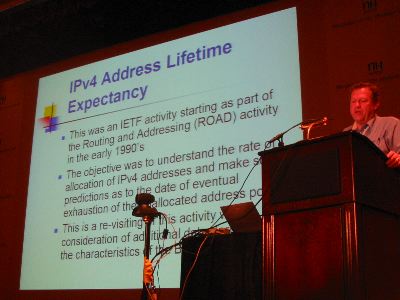

- IPv4 Address Lifetime Expectancy Revisited (posted 2003-09-16)

At the end of the Thursday plenary at the RIPE 46 meeting, Geoff Huston presented

IPv4 Address Lifetime Expectancy Revisited (PDF).

If looking at the slides leaves you puzzled, have a look at one of Geoff's columns from a few months ago that (as always)

explains everything both in great detail and perfect clarity:

IPv4 - How long have we got?

(The full archive is available at http://www.potaroo.net/papers.html.)

Geoff looks at three steps in the address usage process:

- Allocation of a /8 from IANA to a Regional Internet Registry

- Assignment of address space from a RIR to someone who asks for it (usually an ISP)

- The addresses showing up in the global BGP routing table

It looks like the free IANA space is going to run out in 2019. But the RIRs hold a lot of address space in pools of their

own; if we include this, the critical date becomes 2026. Initially, projections of BGP announcements would indicate that all the regularly available address space would be announced in 2027, and a year later if we include the class E (240.0.0.0 and higher) address space.

However, Geoff didn't stop there. After massaging the data, his conclusion was that the growth in BGP announcements doesn't seem exponential after all, but linear. With the surprising result:

"Re-introducing the held unannounced space into the routing

system over the coming years would extend this point by a

further decade, prolonging the useable lifetime of the

unallocated draw pool until 2038 – 2045."

Now of course there are lots of disclaimers: whatever happened in the past isn't guaranteed to happen in the

future, that kind of thing. This goes double for the BGP data, as this extrapolation is only based on three years of data.

But still, many people were pretty shocked. It was a good thing this was the last presentation of the day, because

there were soon lines at the microphones.

So what gives? Ten years ago the projections indicated that the IPv4 address space would be depleted by 2005. Now,

an internet boom and large scale adoption of always-on internet access later, it isn't going to be another couple of years,

but four decades? Seems unlikely. Obviously CIDR, VLSM, NAT and ethernet switching that allows much larger subnets

have all slowed down address consumption. But I think there are some other factors that have been overlooked so far.

One of those is that some of the old assignments (such as entire class A networks) are being used up right now. For instance, AT&T Worldnet holds 12.0.0.0/8, but essentially this space is used much like a RIR block: ranges are further assigned to end-users. We're probably also seeing big blocks of address space disappearing from the global routing table because announcing such a block invites too much worm scanning traffic. On the other hand there are also reports from "ISPs" that assign private address space to their customers and use NAT. So I guess there is a margin of error in both directions.

At the same time, the argument can be made that for all intents and purposes the IPv4 address space has already

run out: it's way too hard to get the address space you need (let alone want). This is in line with what Alain Durand

and Christian Huitema explain in RFC 3194.

They argue that the logarithm of the number of actually used addresses

divided by the logarithm of the number of usable addresses

(the HD ratio) represents a pain level: below a ratio of 80% there is little or no pain, trouble starts at 85%

and 87% represents a practical maximum. For IPv4 that would be 211 million addresses used (note that in the RFC the

number is 240 million, but this is based on the full 32 bits while a little over an eighth of that isn't usable).

According to the latest Internet Domain Survey

we're now at 171 million. This is counting the number of hosts that have a name in the reverse DNS, so the real number

is probably higher.
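The HD ratio arithmetic is easy to reproduce in Python. The sketch below follows the definition given above (log of used addresses divided by log of usable addresses); the function names are made up, and the 3.7 billion usable addresses is the round figure used in this article.

```python
import math


def hd_ratio(used, usable):
    """HD ratio as described above: log(used) / log(usable)."""
    return math.log(used) / math.log(usable)


def addresses_at_ratio(ratio, usable):
    """Number of used addresses that corresponds to a given HD ratio."""
    return usable ** ratio


USABLE_IPV4 = 3.7e9   # usable part of the IPv4 space, per the text
FULL_IPV4 = 2 ** 32   # the full 32 bits, as used in the RFC

# Practical maximum (87%) for the usable space and for the full 32 bits:
print(round(addresses_at_ratio(0.87, USABLE_IPV4) / 1e6), "million")
print(round(addresses_at_ratio(0.87, FULL_IPV4) / 1e6), "million")

# Where 171 million hosts puts us on the pain scale:
print(round(hd_ratio(171e6, USABLE_IPV4), 3))
```

With these inputs, 171 million hosts corresponds to a ratio of roughly 0.86, which is between the "trouble starts" level and the practical maximum.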

I think RFC 3194 is on the right track but rather than simply do a log over the size of the address space, what we should

look at is the number and flexibility of aggregation boundaries. In the RFC phone numbers are cited.

Those have one aggregation boundary: area code vs local number, with a factor 10 flexibility. This means wasting a factor

10 (worst case) once. Classful IPv4 also had a single boundary, but the jumps are 8 bits, so a waste of a factor 256.

With classless IPv4 we have more boundaries, but they're only one bit most of the time: IANA->RIRs, RIR->ISPs,

ISP->customers and subnet. That's four times a factor two, so a factor 16 in total. That means we can use 3.7 billion

addresses / 16 = 231 million IPv4 addresses without pain. Hm... But we can collapse some boundaries to achieve

better utilization.
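The boundary argument boils down to multiplying the worst-case waste at each aggregation boundary. A minimal Python sketch, with a made-up function name and the factors taken from the paragraph above:

```python
import math


def painless_addresses(usable, boundary_factors):
    """Usable addresses divided by the combined worst-case waste."""
    return usable / math.prod(boundary_factors)


USABLE_IPV4 = 3.7e9  # usable IPv4 addresses, per the text

# Classless IPv4: IANA->RIR, RIR->ISP, ISP->customer and subnet, one bit each.
print(painless_addresses(USABLE_IPV4, [2, 2, 2, 2]) / 1e6, "million")

# Classful IPv4: a single boundary with 8 bit jumps.
print(painless_addresses(USABLE_IPV4, [256]) / 1e6, "million")
```

Collapsing a boundary simply removes one factor from the list, which is how better utilization can be achieved.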
